In this blog post, I want to describe one of our implementations of the True North Data Platform (TNDP), which shows how the microservice architecture we adopted several years ago meets our customers’ needs.

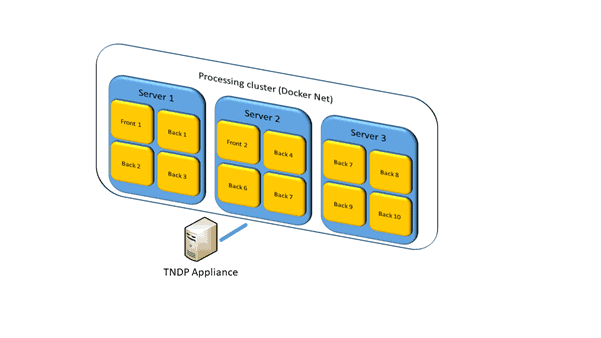

The TNDP is used by data collectors, such as financial regulators, to efficiently collect and process data from external firms. One of the most common configurations uses the TNDP to validate and inspect filings for specific mandates. Some data collectors that validate and inspect filings also need to retain data sovereignty over them, so we offer an appliance with all the components preinstalled. The data collector can plug it into their network and start using the TNDP Appliance immediately.

One such customer has been using the appliance for CRD IV and approached us about a new mandate. The catch: they expected to process roughly 130 million instances a year, received in batches of roughly 30 million once a quarter. Would the TNDP Appliance be able to process that many, and how would it need to be tuned to handle the required volume?

Data Load Details

The customer supplied CoreFiling with several test instances for performance testing, which let us bound the problem. There were 30 million instances to process in a period of 90 days, each between 2 kB and 4 kB in size. The data was to be loaded into our XBRL-aware database (in this case, Oracle), where it is separated into individual data points that can be used with no additional processing. XBRL validation was also required to ensure data integrity.

The customer also has about 20,000 larger instances from other mandates to process within the same 90-day window, so time had to be allowed for those. In the end, we picked 40 days as the target for processing the 30 million instances, leaving time both for the other instances and for a restart if something went wrong.
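As a rough check on what the 40-day target means, the short Python sketch below works out the sustained rate it implies and the raw size of one quarterly batch. It uses only the figures given above (30 million instances of 2 kB to 4 kB each) and leaves out the 20,000 larger instances from other mandates.

```python
# Back-of-envelope sizing for one quarterly batch, using the figures above.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def required_rate(instances: int, window_days: int) -> float:
    """Instances per second needed to finish `instances` within `window_days`."""
    return instances / (window_days * SECONDS_PER_DAY)


def raw_volume_gb(instances: int, size_kb: float) -> float:
    """Total raw filing volume in decimal gigabytes (1 GB = 1,000,000 kB)."""
    return instances * size_kb / 1_000_000


batch = 30_000_000
print(f"{required_rate(batch, 40):.2f} instances/s over 40 days")  # 8.68 instances/s over 40 days
print(f"{required_rate(batch, 90):.2f} instances/s over 90 days")  # 3.86 instances/s over 90 days
print(f"{raw_volume_gb(batch, 2):.0f}-{raw_volume_gb(batch, 4):.0f} GB per quarter")  # 60-120 GB per quarter
```

Sustaining roughly nine instances a second, around the clock, is what the performance testing had to show was achievable.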

XBRL Performance Testing

Performance testing began with validation only, on batches of increasing size from 10 instances up to 100,000. This gave us a performance profile that let us check that the processing time per instance stayed constant across that range.
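A simple way to check that kind of profile is to divide each batch’s total time by its size and confirm that the per-instance figures stay within a tolerance of one another. The Python sketch below does that. The timings in it are made-up placeholders, not CoreFiling’s measurements, and the 10% tolerance is an arbitrary assumption.

```python
from statistics import mean


def per_instance_seconds(results: dict[int, float]) -> dict[int, float]:
    """Map batch size -> average seconds per instance for that batch."""
    return {size: total / size for size, total in results.items()}


def is_flat(results: dict[int, float], tolerance: float = 0.10) -> bool:
    """True if every batch's per-instance time is within `tolerance`
    (a fraction) of the mean per-instance time across all batches."""
    per = per_instance_seconds(results)
    avg = mean(per.values())
    return all(abs(t - avg) <= tolerance * avg for t in per.values())


# Hypothetical batch size -> total seconds; NOT real test results.
steady = {10: 2.0, 100: 20.5, 1_000: 198.0, 10_000: 2_010.0, 100_000: 19_950.0}
degrading = {10: 2.0, 1_000: 200.0, 100_000: 40_000.0}

print(is_flat(steady))     # True
print(is_flat(degrading))  # False
```

A profile like `degrading`, where the per-instance cost doubles at the largest batch size, would signal a scaling problem worth investigating before any larger run.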

With that established, the next step was to rerun the tests with data loading included. This highlighted two issues: one in the handling of the Oracle database, the other in one of the services that make up the TNDP. The first required an update to the shredding component; the second required allocating more memory to the service in Kubernetes. We also scaled the service up so that two instances of it were running.
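For readers less familiar with Kubernetes, both changes to the service come down to two fields on its Deployment: the container’s memory request and limit, and the replica count. The Python sketch below builds such a manifest as a dict and prints it as JSON, which `kubectl apply -f` accepts. The service name, image and the `4Gi` memory figure are hypothetical; the post does not state them.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, memory: str) -> dict:
    """Build a minimal apps/v1 Deployment with a memory request/limit and a replica count."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,                 # number of running copies of the service
            "selector": {"matchLabels": labels},  # must match the pod template labels
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "resources": {
                            "requests": {"memory": memory},  # memory the scheduler reserves
                            "limits": {"memory": memory},    # hard cap for the container
                        },
                    }],
                },
            },
        },
    }


# Hypothetical name, image and memory size: placeholders only.
manifest = deployment_manifest(
    "tndp-service", "registry.example.com/tndp-service:latest", replicas=2, memory="4Gi"
)
print(json.dumps(manifest, indent=2))
```

Saving the output to a file and running `kubectl apply -f` on it would create or update the Deployment; raising `memory` or `replicas` and reapplying is the kind of change described above.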

The testing showed that we could consistently process an instance (including all network and database latency) in four seconds or less. These tests ran on non-production servers with mid-level hardware specifications; prior testing showed that production hardware could be up to 7x faster. At four seconds per instance, the 30 million instances represent 120 million processor-seconds of work, while 40 days is about 3.46 million seconds, so a TNDP processing cluster would be required to finish within the window. That cluster would need 35 True North processors if each one processed only a single instance at a time.
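The same estimate can be expressed as a small Python function that rounds up, because a fraction of a processor still means a whole processor. The second call applies the best-case 7x production speed-up from earlier testing, purely as an illustration; it is not a figure from this deployment.

```python
import math

SECONDS_PER_DAY = 86_400


def processors_needed(instances: int, seconds_per_instance: float, window_days: int) -> int:
    """Single-threaded processors needed to finish all instances within the window."""
    work = instances * seconds_per_instance  # total processor-seconds of work
    window = window_days * SECONDS_PER_DAY   # wall-clock seconds available
    return math.ceil(work / window)


print(processors_needed(30_000_000, 4, 40))      # 35
print(processors_needed(30_000_000, 4 / 7, 40))  # 5 (if production hardware really were 7x faster)
```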

However, a single True North processor can be configured to work in multithreaded mode and process multiple instances at once. There are two parameters we can adjust. The first is the processor’s ‘count’: setting it to more than one (1) allows multiple copies of the processor to run, so more than one instance can be processed at a time. The second is ‘maxConcurrentSubmissions’, which applies globally and limits the total number of instances that can be processed at once. So if ‘maxConcurrentSubmissions’ is set to one (1) and the processor’s ‘count’ is set to two (2), the TNDP will still process only one instance at a time. We recommend setting ‘maxConcurrentSubmissions’ to a value equal to or less than the number of cores in the server.
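The interaction between the two settings can be modelled with a thread pool and a semaphore. This Python sketch models the behaviour described above and is not TNDP code. In the model, `count` becomes the number of worker threads, `maxConcurrentSubmissions` becomes a global semaphore, and each instance is simulated by a short sleep. The function reports the peak number of instances in flight, which can never exceed the smaller of the two settings.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def peak_concurrency(count: int, max_concurrent_submissions: int, jobs: int = 20) -> int:
    """Run `jobs` simulated instances on `count` processor copies, gated by a
    global limit, and return the most instances ever in flight at once."""
    gate = threading.BoundedSemaphore(max_concurrent_submissions)  # global cap
    lock = threading.Lock()
    in_flight = 0
    peak = 0

    def process_instance(_: int) -> None:
        nonlocal in_flight, peak
        with gate:  # wait for a free global slot
            with lock:
                in_flight += 1
                peak = max(peak, in_flight)
            time.sleep(0.01)  # stand-in for validating and loading one instance
            with lock:
                in_flight -= 1

    # One worker thread per processor copy ('count').
    with ThreadPoolExecutor(max_workers=count) as pool:
        list(pool.map(process_instance, range(jobs)))
    return peak


print(peak_concurrency(count=2, max_concurrent_submissions=1))  # 1
print(peak_concurrency(count=4, max_concurrent_submissions=4))  # never more than 4
```

With `count=2` and `max_concurrent_submissions=1`, only one instance is ever processed at a time, which matches the example above.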

Deployment

The customer wanted to run the TNDP in Docker containers, as they were moving towards microservices and containerisation. We therefore recommended servers with eight (8) cores and configured TNWSP with ‘count’ set to four (4) and ‘maxConcurrentSubmissions’ also set to four (4). We then used Docker Compose to run 10 instances of TNWSP across three (3) servers. Microservice technology makes deploying a cluster of any size very simple.
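The following Python sketch gives a quick capacity check for this layout. It rests on two assumptions that are not details from the deployment. First, it assumes the 10 TNWSP containers were spread as evenly as possible over the three servers. Second, it assumes each container’s concurrency is capped at min(`count`, `maxConcurrentSubmissions`), which means the global limit applies per TNWSP instance.

```python
def plan_cluster(replicas: int, servers: int, slots_per_replica: int) -> tuple[list[int], int]:
    """Spread replicas as evenly as possible over servers and return
    (replicas per server, total instances processable at once)."""
    base, extra = divmod(replicas, servers)
    per_server = [base + (1 if i < extra else 0) for i in range(servers)]
    return per_server, replicas * slots_per_replica


count, max_concurrent_submissions = 4, 4
slots = min(count, max_concurrent_submissions)  # assumed per-container cap

layout, total_slots = plan_cluster(replicas=10, servers=3, slots_per_replica=slots)
print(layout, total_slots)  # [4, 3, 3] 40
print(total_slots >= 35)    # True: covers the 35 single-threaded processors estimated earlier
```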

Once live, this setup will allow at least 35 instances to be processed in parallel, enough to process the 30 million instances within the 40-day window. The customer’s concerns have been resolved (processing is 10x faster than budgeted for), and they are comfortable that future increases in volume can be handled.

Conclusion

The TNDP can process very high-volume transactional mandates with nothing more than some performance testing and scaling of the microservices.

If you have granular data collections on the horizon, such as AnaCredit or national data requirements, contact our team.